Report Reveals Toxic Telegram Group Generating X-Rated AI-Generated Fake Images of Taylor Swift

A Telegram group created explicit fake images of Taylor Swift using AI, according to a report. The group bypassed safeguards in Microsoft's AI tool, Designer, to generate these images by cleverly altering their prompts.

Microsoft has acted on some reports but has yet to confirm that its tools were used, and it says it is working to prevent such misuse. The fake images, originally circulated on Telegram, have since spread across social media platforms, including X (formerly Twitter), where they have drawn significant views and controversy.

Microsoft is investigating the issue, and a representative emphasized the company's commitment to responsible AI use and its prohibition on creating adult content with its tools. Meanwhile, X (formerly Twitter) is working to remove the images and has reminded users that non-consensual nudity is against its policy.

Taylor Swift's situation has highlighted the broader issue of deepfake pornography, prompting legal and regulatory discussions. In the U.S., proposed legislation aims to criminalize deepfake porn, while the UK's Online Safety Act imposes heavy fines for illegal content but raises privacy concerns due to the scanning of private messages.

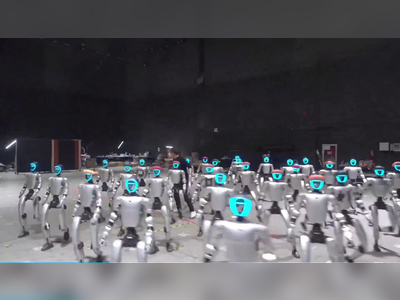

AI companies are taking steps to limit the production of not-safe-for-work (NSFW) content, though creative users still find ways around these restrictions. Social media platforms are struggling to keep up with how quickly such images spread, underscoring the need for more robust ways to curb deepfake pornography.
